An overview of the whiteboard animation. For this, I shut myself away for a few hours to draw on a real whiteboard, then redrew the mural in Photoshop while screen recording, and later added movement in After Effects.

Brytan and Karen at the top of Sierra Negra repping our McGill gear.

These creatures are marine iguanas. They live atop the rocky outcrops and cliffs across the islands, absorbing the solar heat. The white spots on their heads are salt residue that they expel from their nostrils.

More marine iguanas, photographed on Tintoreras

This whiteboard drawing shows how a volcano dies after it moves off of the mantle plume, and how new islands form as the crust moves.

This drawing illustrates how, much like a building in high wind, the tall mantle plumes stay in place as the crust moves atop them.

A screenshot of the full virtual reality map setup

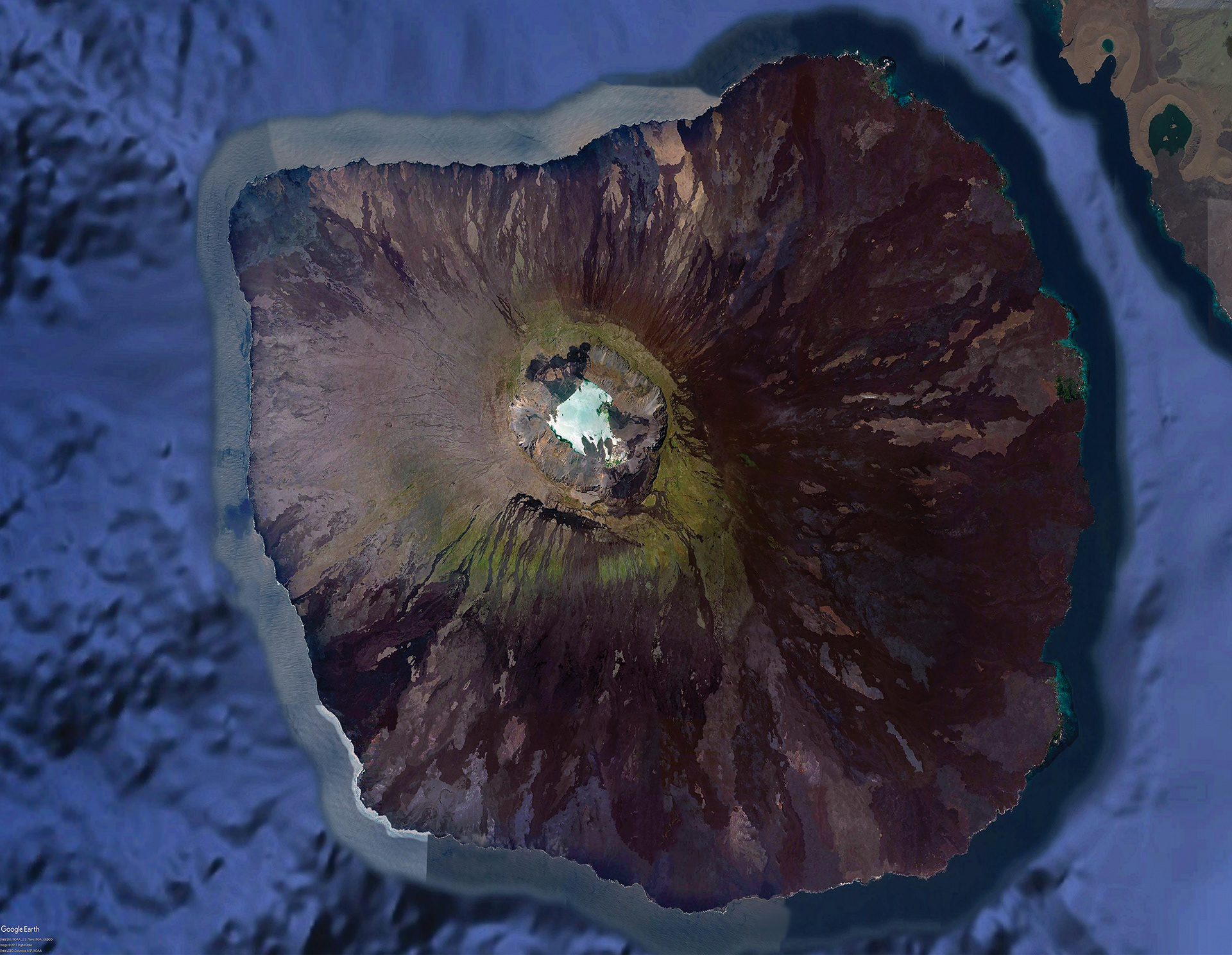

This photo of the island of Fernandina was created in Photoshop using Google Earth imagery. A special process was used to equalize the different patches that result from multiple satellite passes and to remove clouds, which are notably absent from this photo. This image, along with a set from the other islands, was overlaid onto 3D models of the islands to create a lifelike, accurate model for VR. These photos were used as part of an NSF-funded project on Galapagos volcanoes, as they are among the few detailed, unobstructed images of the islands.

Pablo, our renowned guide and a scientist by trade, talks to the camera on Sierra Negra on the island of Isabela

Caio Brighenti on the rim of Sierra Negra

Brytan, Caio, and Emily waiting at JFK to fly on the red-eye to Guayaquil, Ecuador

VGP in Daydream

Overview

The Virtual Galapagos Project (VGP) began as a concerted effort by a small team consisting of myself and two Colgate undergraduate students, a professor at Colgate University, and a member of Colgate’s instructional design team, with funding from McGill University. Our primary objective is to design a way for children around the world to learn about science, using the Galapagos Islands as motivation. Our core philosophy is that the science should be digestible without being overly simplistic, and that students should be able to choose their own path, thus taking agency in their learning. We are currently developing a pilot of this program in the form of a 3D application for computers and mobile devices, featuring virtual reality elements.

This project began in the summer of 2017, when I spent 10 weeks on the Colgate University campus as a Public Policy Fellow and Labs Without Borders Fellow on behalf of McGill University, building the pilot phase of what would become a much larger, long-term project. This included a 10-day trip to the Galapagos, where we collected thousands of videos and photos, while interviewing locals, park guides, and scientists to truly immerse our viewers in the mind of a scientist.

Visual Design

My expertise came from my background in filmmaking, action photography, and video editing. The VGP was filmed using an array of tools including two GoPro OMNI VR camera rigs, a Kolor Abyss Underwater VR rig, a Sony A7R II, and a Canon 6D. My primary video editing software was Adobe Premiere Pro, with primary colour correction done on Blackmagic Design's DaVinci Resolve control surface. Through this experience, I became very familiar with 360° video and the nuances of stitching six different shots together into a single 360° shot using GoPro's VR editing suite of Autopano Video Pro and Autopano Giga. We also utilized Garden Gnome's PanoTour Pro 2.

Illustrations were first drawn by hand and then redrawn digitally while being captured with a screen recorder running on the GPU. I worked primarily in Adobe Photoshop, as my skill set was built in that program, and often used Adobe Dynamic Link to transition into Adobe After Effects. The illustrations were done using a set of 4K monitors in combination with a pair of Intuos and Cintiq tablets from Wacom.

Our first VR modules made frequent use of two main types of 3D models: geography-focused and interface-focused models. Geographic models were based on GIS data obtained from the USGS, the US Navy, and other sources, pieced together in 3DS Max by my mentor and director of the VisLab, Joe Eakin, and myself. The models for the UI/UX were designed by my colleague Desmond Tuiyot, who adapted features from his larger reconstruction of the ancient Mesoamerican city of Teotihuacán. He also helped me learn 3DS Max and Maya, building on my prior experience within the Autodesk suite.
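To give a sense of what turning GIS elevation data into a 3D model involves, here is a minimal Python sketch that converts a small elevation grid into a triangle mesh and writes it out as Wavefront OBJ text, a format most 3D packages can import. This is only an illustration under stated assumptions, not the workflow we used in 3DS Max: the function names `heightmap_to_mesh` and `to_obj`, the `cell_size` and `vertical_scale` parameters, and the sample elevations are all hypothetical.

```python
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]


def heightmap_to_mesh(
    elevation: Sequence[Sequence[float]],
    cell_size: float = 1.0,        # hypothetical: ground distance between samples
    vertical_scale: float = 1.0,   # hypothetical: exaggeration applied to heights
) -> Tuple[List[Vertex], List[Face]]:
    """Turn a rectangular grid of elevations into vertices and triangles."""
    rows = len(elevation)
    cols = len(elevation[0]) if rows else 0
    if rows < 2 or cols < 2:
        raise ValueError("need at least a 2x2 grid to build triangles")
    if any(len(row) != cols for row in elevation):
        raise ValueError("all rows must have the same length")

    # One vertex per grid sample, stored row by row.
    vertices: List[Vertex] = [
        (c * cell_size, r * cell_size, elevation[r][c] * vertical_scale)
        for r in range(rows)
        for c in range(cols)
    ]

    # Two triangles per grid cell, indexed into the vertex list (0-based).
    faces: List[Face] = []
    for r in range(rows - 1):
        for c in range(cols - 1):
            top_left = r * cols + c
            top_right = top_left + 1
            bottom_left = top_left + cols
            bottom_right = bottom_left + 1
            faces.append((top_left, bottom_left, top_right))
            faces.append((top_right, bottom_left, bottom_right))
    return vertices, faces


def to_obj(vertices: List[Vertex], faces: List[Face]) -> str:
    """Serialize the mesh as Wavefront OBJ text (OBJ face indices are 1-based)."""
    lines = [f"v {x} {y} {z}" for x, y, z in vertices]
    lines += [f"f {a + 1} {b + 1} {c + 1}" for a, b, c in faces]
    return "\n".join(lines) + "\n"


# Made-up sample: a 2x3 grid of elevations in metres.
sample_vertices, sample_faces = heightmap_to_mesh(
    [[0, 1, 2], [3, 4, 5]], cell_size=10.0, vertical_scale=2.0
)
```

A real island model would start from a far denser grid and would usually be simplified before texturing, but the grid-to-triangles step is the same idea.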

Recently, I have been experimenting with two tools. The first is Esri's ArcGIS StoryMaps, which provides a beautiful map-focused storytelling interface for creating interactive, web-based geotours. The second is Google Earth Studio, an animation platform that lets educators, researchers, and journalists combine location data and KML overlays, petabytes of visual data from Google Earth, and 3D tracking data for After Effects to tell animated stories about the planet and its people. You can read more about Google Earth Studio here.

Audio Design

I took over audio design for a month in August 2017 after one of our colleagues regrettably felt he could not produce the results we needed. Over a weekend, I learned Adobe Audition and began cleaning and logging audio clips and interviews. I later transitioned into the Avid Pro Tools environment once construction of our audio editing bay finished and the editing surface was installed, just before the Fall term.

While I was intrigued by the prospect of using binaural microphones, we decided against them for the Galapagos trip, as we were unsure how well true binaural tracks would apply in a spatial audio space. Ultimately, our spatial immersion was created using Unity3D and Facebook's audio editing suite, Facebook 360 Spatial Workstation, with inspiration and assistance from the Facebook 360 Developer group community.

Audio was recorded using a RØDE Stereo VideoMic and a pair of Zoom H5 recorders. I gained valuable experience monitoring levels, which I hadn't had to do up until then.

Technical Design

The VGP framework was built upon original virtual reality mechanisms we developed in the Unity3D engine using C# and JavaScript. This effort was led primarily by my colleague (and rising sports analytics star) Caio Brighenti.

In 2018, we entered into conversations with Lev Horodyskyj, a curriculum designer at Arizona State University. Horodyskyj and his team at ASU teach geology in remote locations using an ASU-developed system called SolarSPELL: Solar Powered Educational Learning Library. Designers load microSD cards full of educational media into Raspberry Pi computers and make the library accessible via mobile phone. No Internet required.

In 2019, to make the program fit onto a SolarSPELL, we decided to shift to a web-based platform, particularly because accessibility was a top priority in our design plan.
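As a rough illustration of why a web-based build suits an offline device, here is a minimal sketch that serves a folder of static files over a local network using only Python's standard library, so that a phone on the same network could open the pages in its browser. This is not SolarSPELL's actual software stack, which we do not describe here: the function name `make_offline_server`, the default port, and the content path in the usage comment are all hypothetical.

```python
import functools
from http.server import SimpleHTTPRequestHandler, ThreadingHTTPServer


def make_offline_server(
    content_dir: str,          # hypothetical: folder holding the web build
    host: str = "0.0.0.0",     # listen on all interfaces of the local network
    port: int = 8000,          # hypothetical default port
) -> ThreadingHTTPServer:
    """Create (but do not start) a static file server rooted at content_dir."""
    # Bind the handler to the content folder instead of the current directory.
    handler = functools.partial(SimpleHTTPRequestHandler, directory=content_dir)
    return ThreadingHTTPServer((host, port), handler)


# Usage (hypothetical path); serve_forever() blocks until interrupted:
# server = make_offline_server("/media/sdcard/virtual-galapagos")
# server.serve_forever()
```

Because everything is plain HTML, JavaScript, and media files, nothing in this setup needs an Internet connection once the files are on the card.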

Present

At this point, the project has moved into a more accessible format, with one digital module currently available online. The page does not reflect our most recent version, however; due to restrictions beyond our control, that version is confined to internal testing. The live version of Colgate Virtual Galapagos is available at http://virtualgalapagos.colgate.edu. We encourage those interested to check back later or contact us as we update the site throughout the summer and fall.

Acknowledgements

This project would not have been possible without the help of a number of select partners, whose continued support has allowed us to hire more students, purchase state-of-the-art equipment, and travel to these remote areas and capture them for the world to see:

McGill University Lab Without Borders

McGill University Office of Science and Society (OSS)

Mr. and Mrs. Stephen and Jane Savidant

Colgate University Geology Department

Colgate University Natural Sciences and Mathematics (NASC) Department

Mr. Robert H.N. Ho, CM OBC

Arizona State University

All the scientists whose materials and/or interviews were used with their permissions